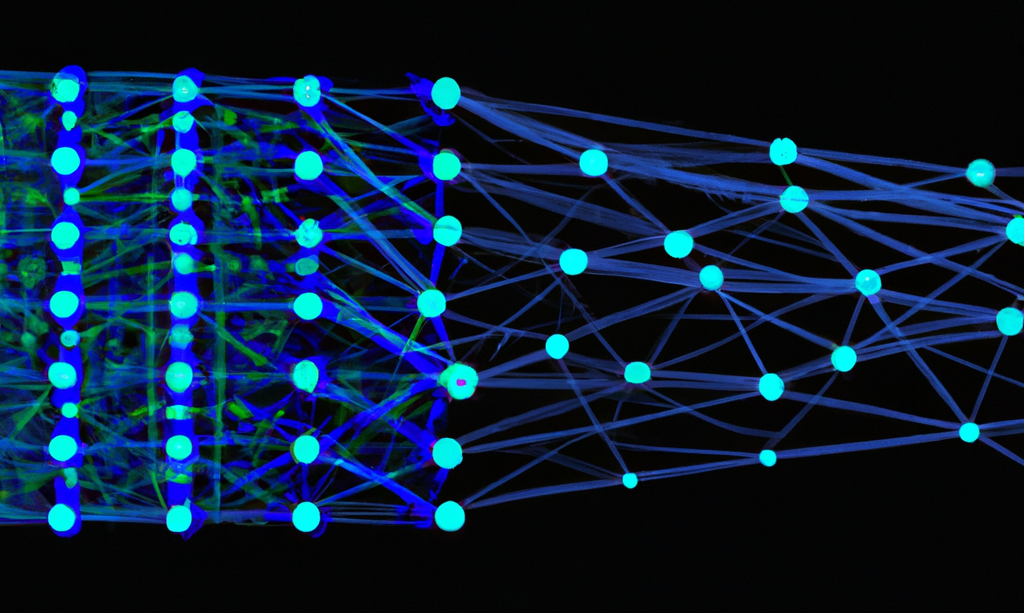

Project: Compare the inner workings of neural networks

Description

With different initialization methods [1] or pruning strategies [2], we can derive various neural networks (NNs) that share the same architecture but have different weights. Previous work generally focuses on comparing the performance and structure of such NNs [3]. However, this is insufficient to explain the behavior of different models. A few works integrate explainable AI (XAI) methods into the comparison pipeline [4][5]; they generally compare the feature-based explanations that different models produce for the same instances. In this project, we aim to go a step further and compare the inner workings of different NNs. How do learned features evolve in different NNs? Do different NNs learn the same thing in the same layer?

Requirements:

- Good programming skills, ideally with web development experience (JavaScript, D3.js)

- Understanding of NN models and their inner workings, ideally also of explainable AI (XAI) methods

- Visualization background

Please contact Linhao Meng (l.meng1@tue.nl) if you are interested in this project.

References

[1] Narkhede, M.V., Bartakke, P.P. & Sutaone, M.S. A review on weight initialization strategies for neural networks. Artif Intell Rev 55, 291–322 (2022). https://doi.org/10.1007/s10462-021-10033-z

[2] Blalock, Davis, et al. "What is the state of neural network pruning?." Proceedings of machine learning and systems 2 (2020): 129-146. https://doi.org/10.48550/arXiv.2003.03033

[3] Zeng, H., Haleem, H., Plantaz, X., Cao, N. and Qu, H., 2017. CNNComparator: Comparative analytics of convolutional neural networks. arXiv preprint arXiv:1710.05285.

[4] X. Xuan, X. Zhang, O. Kwon and K. Ma, "VAC-CNN: A Visual Analytics System for Comparative Studies of Deep Convolutional Neural Networks" in IEEE Transactions on Visualization & Computer Graphics, vol. 28, no. 06, pp. 2326-2337, 2022. doi: 10.1109/TVCG.2022.3165347

[5] Meng, L., van den Elzen, S. and Vilanova, A. (2022), ModelWise: Interactive Model Comparison for Model Diagnosis, Improvement and Selection. Computer Graphics Forum, 41: 97-108. https://doi.org/10.1111/cgf.14525

Details

- Supervisor: Anna Vilanova

- Secondary supervisor: Linhao Meng
