Project: Bringing Brain Surgery Images to Life in 3D

Description

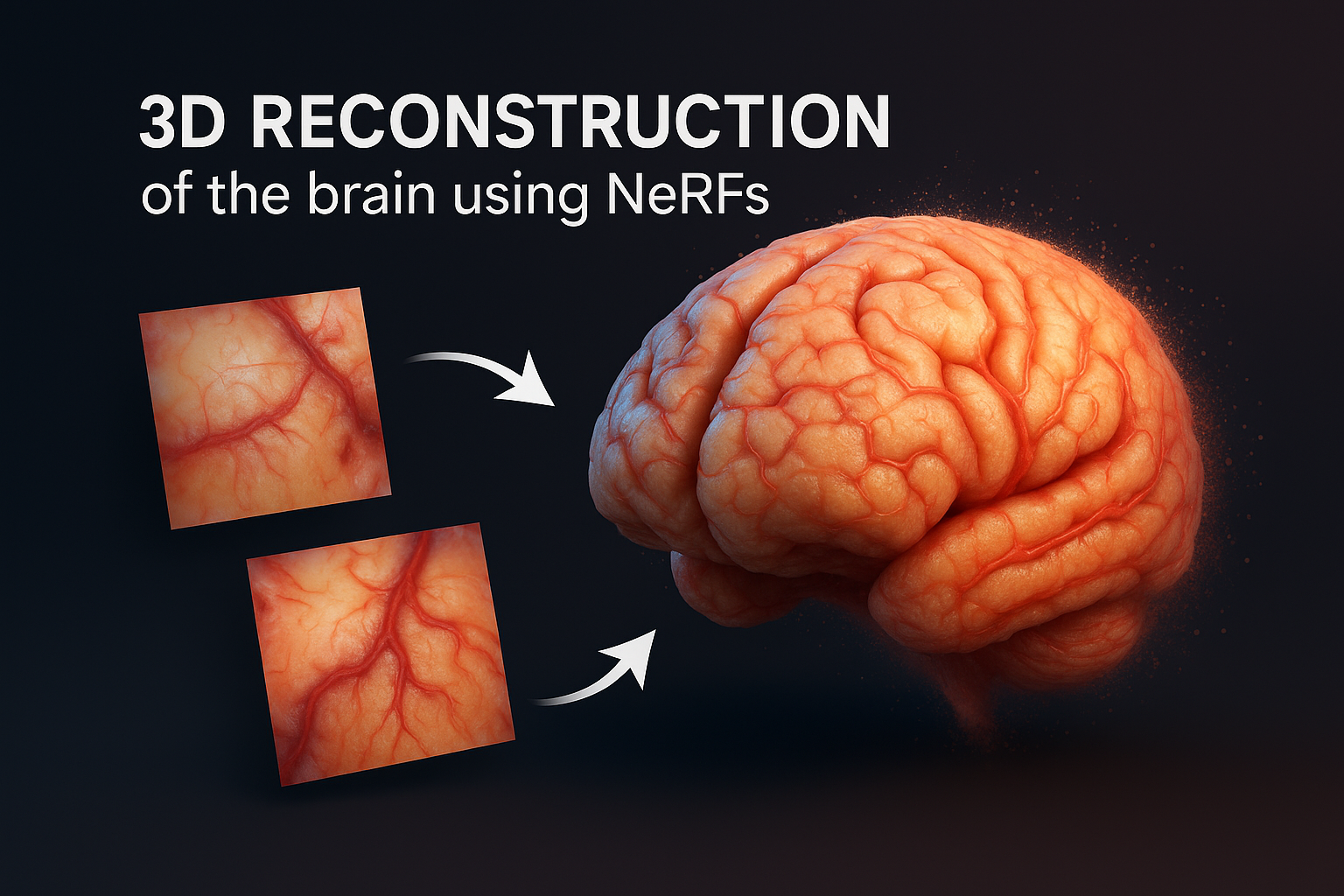

In neurosurgery, surgeons often rely on images taken during the operation to assess how much of a tumor has been removed and to plan the next steps. These images typically capture the surface of the brain (the cortex, blood vessels, and some white matter), but they are only 2D photos viewed on flat screens, which makes it difficult to fully understand the 3D structure of the surgical area.

This project explores how modern 3D reconstruction and novel-view synthesis techniques, such as Neural Radiance Fields (NeRFs) and Gaussian Splatting, can turn these 2D images into interactive 3D visualizations. Both methods build a 3D representation of a scene from multiple photographs taken from different viewpoints (with known or estimated camera poses). That representation can then be rendered from new angles, so users can move around the area and view it from any direction.
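Both NeRFs and Gaussian Splatting form each rendered pixel by blending color contributions front to back along the viewing direction. The minimal sketch below illustrates that compositing step for a single camera ray in the NeRF formulation, where each sample's opacity comes from a density σ and step length δ as α = 1 − exp(−σδ). In Gaussian Splatting, the α values would instead come from depth-sorted, projected Gaussians, but the blending is the same. The function name `composite_ray` and its inputs are hypothetical and illustrative only; this is not code from the project.

```python
import math


def composite_ray(colors, densities, deltas):
    """Front-to-back alpha compositing along one camera ray (NeRF-style sketch).

    colors:    list of (r, g, b) tuples, one per sample along the ray
    densities: list of non-negative densities sigma_i, one per sample
    deltas:    list of distances between consecutive samples
    Returns (pixel_rgb, accumulated_opacity).
    """
    transmittance = 1.0  # fraction of light that still reaches the camera
    pixel = [0.0, 0.0, 0.0]
    for color, sigma, delta in zip(colors, densities, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this ray segment
        weight = transmittance * alpha  # contribution of this sample to the pixel
        for k in range(3):
            pixel[k] += weight * color[k]
        transmittance *= 1.0 - alpha  # light blocked by this segment
    return tuple(pixel), 1.0 - transmittance
```

For example, if the first sample is effectively opaque (a very large density), it hides everything behind it: `composite_ray([(1.0, 0.0, 0.0), (0.0, 0.0, 1.0)], [1e9, 1.0], [1.0, 1.0])` returns a pure red pixel with full opacity. In a full NeRF, the colors and densities would come from a trained network queried at each sample point.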

The goal is to develop a system that can generate accurate 3D reconstructions of the surgical field from photos taken during brain surgery. This would give neurosurgeons a clearer, spatially continuous way to review the extent of tumor resection and better understand the surrounding tissue during and after the procedure.

Details

- Student: Zichuan Zhang
- Supervisor: Maxime Chamberland
- Secondary supervisor: Bram Kraaijeveld