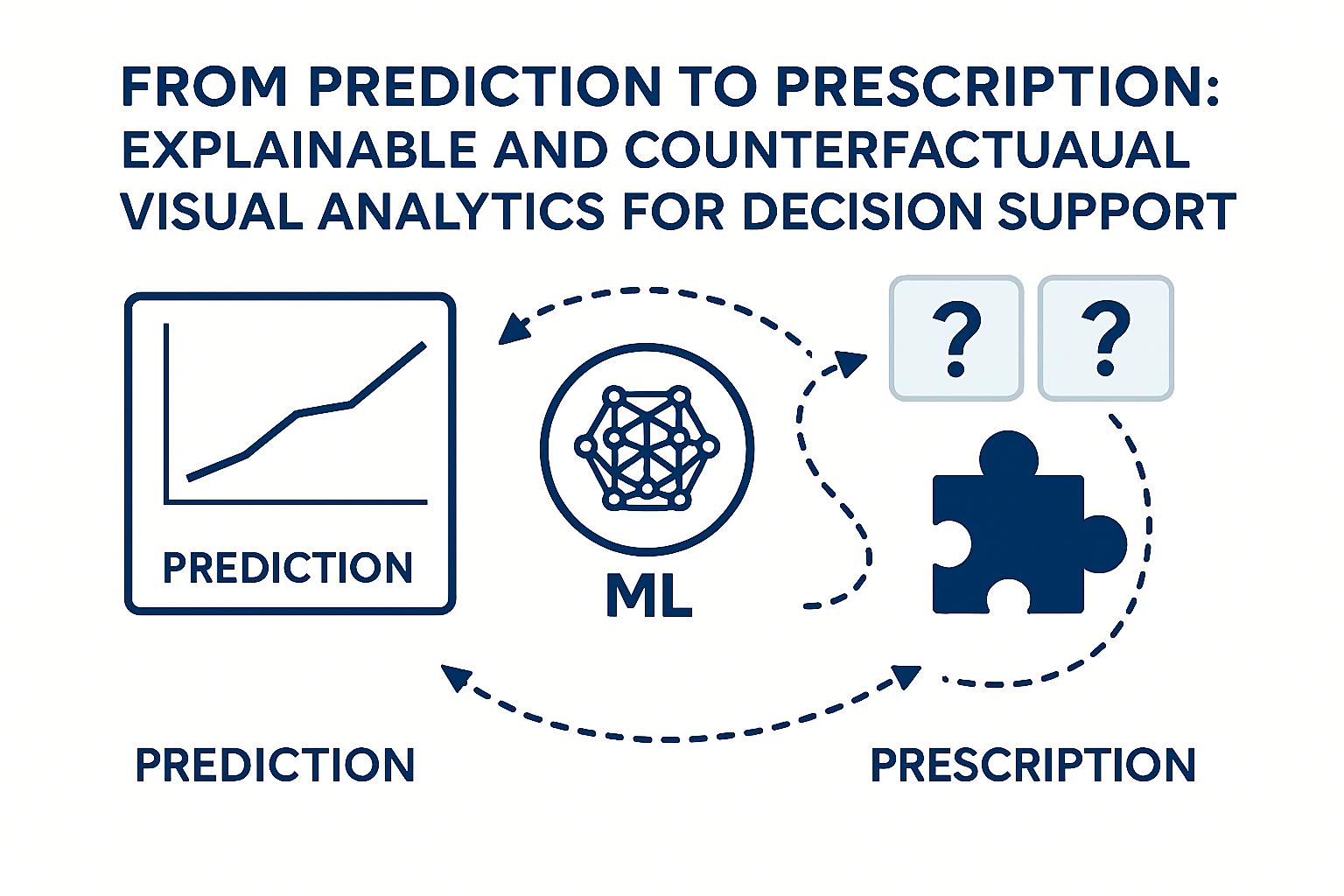

Project: From Prediction to Prescription: Explainable and Counterfactual Visual Analytics for Decision Support

Description

Predictive visual analytics systems are widely used to forecast future outcomes such as risks, failures, demand, or performance. These systems are increasingly applied across domains like energy systems, supply chains, finance, manufacturing, and urban mobility, where machine learning models predict what is likely to happen based on historical data. While these predictions provide valuable foresight, they often leave decision makers with an unresolved and critical question:

What should be done to change an undesirable predicted outcome?

In practice, analysts and domain experts must manually translate predictions into actions, relying on experience, heuristics, or external tools. This disconnect limits the usefulness of predictive analytics in real-world decision-making, particularly in complex domains where decisions involve trade-offs, constraints, and uncertainty. For example:

- In energy and sustainability, forecasts may predict peak demand or grid instability without clearly indicating which interventions (e.g., load shifting, storage usage, demand response) would mitigate the risk.

- In supply chain and logistics, models may predict delays or shortages but do not prescribe operational adjustments such as rerouting, inventory redistribution, or production changes.

- In finance and risk management, risk scores can indicate exposure but do not provide transparent guidance on feasible portfolio rebalancing or risk‑mitigation actions.

- In manufacturing and predictive maintenance, failure predictions highlight problems but leave engineers to determine maintenance schedules or operational changes on their own.

- In urban planning and mobility, forecasts of congestion or emissions raise alarms without clearly comparing alternative policies or infrastructure interventions.

Prescriptive analytics aims to bridge this gap by recommending actions that can change predicted outcomes. However, many prescriptive approaches rely on opaque optimization pipelines or black‑box recommendations, making it difficult for users to understand why a particular action is suggested, what assumptions it is based on, and what trade‑offs it implies. This lack of transparency raises concerns about trust, responsibility, and appropriate human oversight.

This master's project investigates how visual analytics, explainable AI, and counterfactual reasoning can be combined to turn predictive systems into human‑centered prescriptive decision‑support tools. The project explores how users can interactively move from predictions (“what is likely to happen”) to prescriptions (“what actions could improve the outcome”), while maintaining transparency, user control, and contextual understanding.

Goals

The main goals of this project are to:

- Transform predictive visual analytics workflows into prescriptive visual analytics systems.

- Integrate explainable AI techniques that justify both predictions and recommended actions.

- Use counterfactual explanations to propose feasible interventions that improve outcomes.

- Design interactive visual interfaces that allow users to explore alternative actions, constraints, uncertainties, and trade‑offs.

- Derive design recommendations for responsible and trustworthy prescriptive visual analytics across multiple application domains.

Approach (High‑Level)

The project typically involves:

- Starting from a predictive model relevant to one of the target domains.

- Generating counterfactual scenarios that describe actionable changes leading to better outcomes.

- Integrating explainability techniques to clarify why predictions and prescriptions arise.

- Designing visual analytics interfaces that support “what‑if” reasoning and comparative decision making.

- Evaluating how prescriptive visual analytics affects understanding, trust, and decision quality.
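To make the counterfactual step above more concrete, here is a minimal sketch of the idea. It is illustrative only: the toy logistic risk model, its weights, the feature names (`load`, `storage`, `temperature`), and the function `find_counterfactual` are all hypothetical and not part of the project. The greedy search is a simple stand-in for dedicated counterfactual or recourse methods, chosen only because it fits in a few lines.

```python
import math

# Hypothetical toy risk model (an assumption for illustration):
# a logistic function over three features of an energy scenario.
WEIGHTS = {"load": 0.08, "storage": -0.05, "temperature": 0.03}
BIAS = -4.9


def predict_risk(x):
    """Return the predicted probability of an undesirable outcome."""
    z = BIAS + sum(WEIGHTS[k] * x[k] for k in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def find_counterfactual(x, actionable, bounds, target=0.5, step=1.0, max_iter=1000):
    """Greedy search for a feasible counterfactual.

    At each iteration, apply the single one-step change to an actionable
    feature that lowers the predicted risk the most, without leaving the
    allowed bounds. Return the modified scenario once the risk drops
    below `target`, or None if no feasible improvement remains.
    """
    cf = dict(x)
    for _ in range(max_iter):
        current = predict_risk(cf)
        if current < target:
            return cf
        best, best_risk = None, current
        for k in actionable:
            lo, hi = bounds[k]
            for delta in (-step, step):
                value = cf[k] + delta
                if not lo <= value <= hi:
                    continue  # respect feasibility constraints
                candidate = dict(cf)
                candidate[k] = value
                risk = predict_risk(candidate)
                if risk < best_risk:
                    best, best_risk = candidate, risk
        if best is None:
            return None  # stuck: no allowed change reduces the risk
        cf = best
    return None


# Example scenario: temperature cannot be acted upon, so it is not
# listed as actionable and stays fixed.
scenario = {"load": 80.0, "storage": 10.0, "temperature": 30.0}
limits = {"load": (60.0, 100.0), "storage": (0.0, 40.0)}
result = find_counterfactual(scenario, ["load", "storage"], limits)

print("original risk:", round(predict_risk(scenario), 3))
if result is not None:
    changes = {k: result[k] - scenario[k] for k in result if result[k] != scenario[k]}
    print("suggested changes:", changes)
    print("counterfactual risk:", round(predict_risk(result), 3))
```

The difference between the original scenario and the returned one is the candidate prescription. Restricting the search to actionable features within bounds is what separates a feasible intervention from an arbitrary counterfactual, and these constraints are the kind of parameters an interactive interface could expose to the user.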

Expected Outcome

The outcome of this project is a visual analytics prototype that demonstrates how predictive models can be extended into explainable, interactive prescriptive systems. The project will provide insights into how users interpret and reason with recommendations and offer design guidelines for integrating prescriptive analytics into real‑world decision‑support tools across energy, logistics, finance, manufacturing, and urban systems.

References

- Amir-Hossein Karimi, Gilles Barthe, Bernhard Schölkopf, and Isabel Valera. 2022. A Survey of Algorithmic Recourse: Contrastive Explanations and Consequential Recommendations. ACM Comput. Surv. 55, 5, Article 95 (May 2023), 29 pages. https://doi.org/10.1145/3527848

- Goyal, Y., Wu, Z., Ernst, J., Batra, D., Parikh, D., and Lee, S. (2019). Counterfactual Visual Explanations. Proceedings of the 36th International Conference on Machine Learning, in Proceedings of Machine Learning Research 97:2376-2384. Available from https://proceedings.mlr.press/v97/goyal19a.html

Details

- Supervisor: Fernando Paulovich
